Wiz Research recently uncovered a publicly exposed database belonging to DeepSeek, a Chinese AI startup known for its AI models.

The exposed database, a ClickHouse instance, was found to be publicly accessible without any authentication, allowing unauthorized access to sensitive internal data. This incident raises serious concerns about the security practices surrounding rapidly adopted AI technologies.

What DeepSeek Data Was Exposed?

- Secret Keys

- Plain-text chat messages

- Backend Details

- Internal Secrets and Logs

- Over 1 million log entries

ClickHouse is an open-source, columnar database management system designed for fast analytical queries on large datasets. It was developed by Yandex and is widely used for real-time data processing, log storage, and big data analytics.

Key Points of the Incident

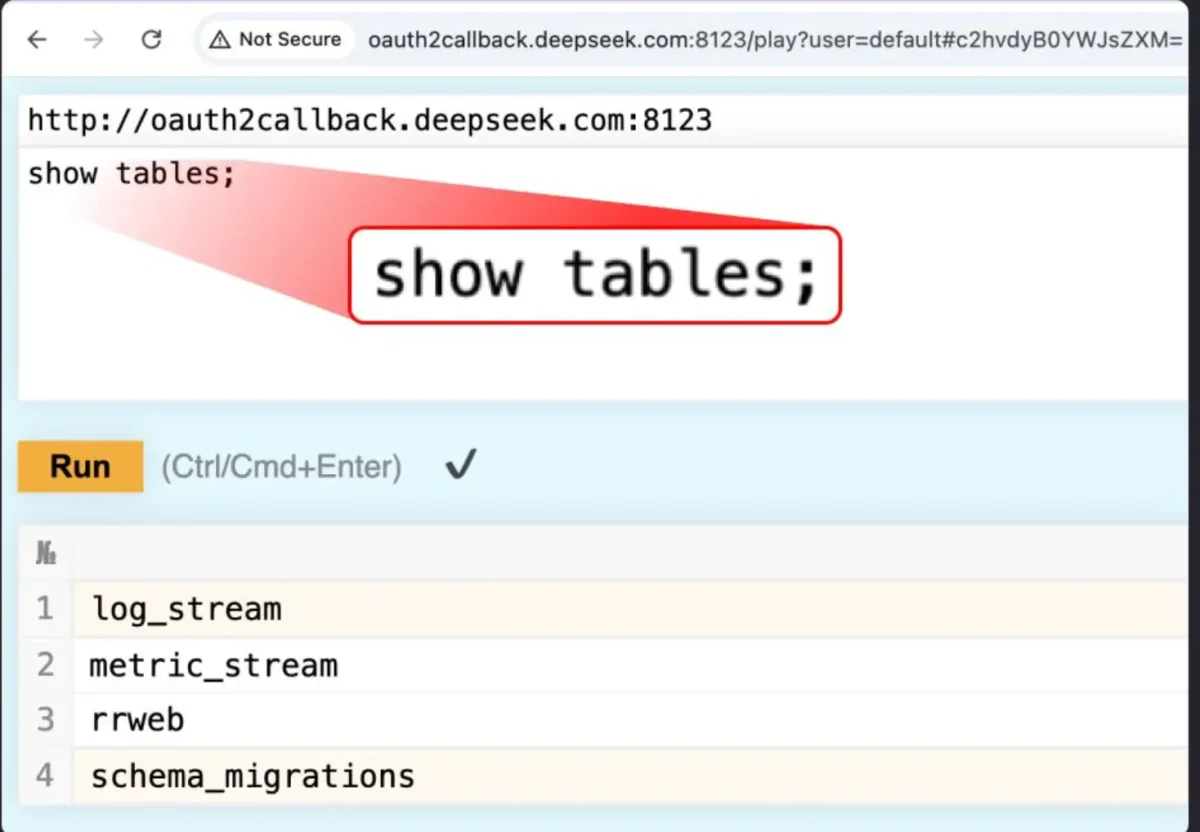

- Database Exposure: The ClickHouse database was hosted at oauth2callback.deepseek.com:9000 and dev.deepseek.com:9000, exposing over one million log entries, including chat histories, API keys, and backend operational details.

- Full Control Access: The exposure allowed for full control over database operations, including executing arbitrary SQL queries without any authentication, posing a severe risk of data exfiltration and privilege escalation.

- Sensitive Information: The log_stream table contained plaintext logs that revealed critical information such as chat histories and API secrets, which could be exploited by malicious actors.

Wiz Research detected two unusual open ports (8123 and 9000) on the following hosts:

- http://oauth2callback.deepseek.com:8123

- http://dev.deepseek.com:8123

- http://oauth2callback.deepseek.com:9000

- http://dev.deepseek.com:9000

Upon further investigation, these ports led to a publicly exposed ClickHouse database, accessible without any authentication at all – immediately raising red flags.
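A similar check can be run against hosts you own. The following is a minimal sketch, not the method Wiz used: it tests whether the two default ClickHouse ports (8123 for the HTTP interface, 9000 for the native protocol) accept connections, and whether the HTTP interface will run a harmless `SELECT 1` with no credentials supplied. The function names and the host `db.internal.example` are hypothetical.

```python
import http.client
import socket
import urllib.error
import urllib.parse
import urllib.request

# ClickHouse defaults: 8123 = HTTP interface, 9000 = native TCP protocol.
CLICKHOUSE_PORTS = (8123, 9000)


def port_is_open(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def build_probe_url(host: str, port: int = 8123) -> str:
    """Build a harmless `SELECT 1` request for the ClickHouse HTTP interface."""
    query = urllib.parse.urlencode({"query": "SELECT 1"}, quote_via=urllib.parse.quote)
    return f"http://{host}:{port}/?{query}"


def answers_without_auth(host: str, port: int = 8123, timeout: float = 3.0) -> bool:
    """Return True if the HTTP interface executes a query with no credentials."""
    try:
        with urllib.request.urlopen(build_probe_url(host, port), timeout=timeout) as resp:
            return resp.status == 200 and resp.read().strip() == b"1"
    except (urllib.error.URLError, http.client.HTTPException, OSError):
        # Connection refused, timeout, auth error (4xx/5xx), or non-HTTP reply.
        return False
```

For example, `[p for p in CLICKHOUSE_PORTS if port_is_open("db.internal.example", p)]` lists which of the two ports are reachable, and `answers_without_auth("db.internal.example")` reports whether a query runs unauthenticated. Only probe systems you are authorized to test.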

The vulnerability has since been fixed by the DeepSeek team.

Wiz said that once it discovered the exposure, it promptly reported it to the DeepSeek team, which restricted public access and took the database off the internet.

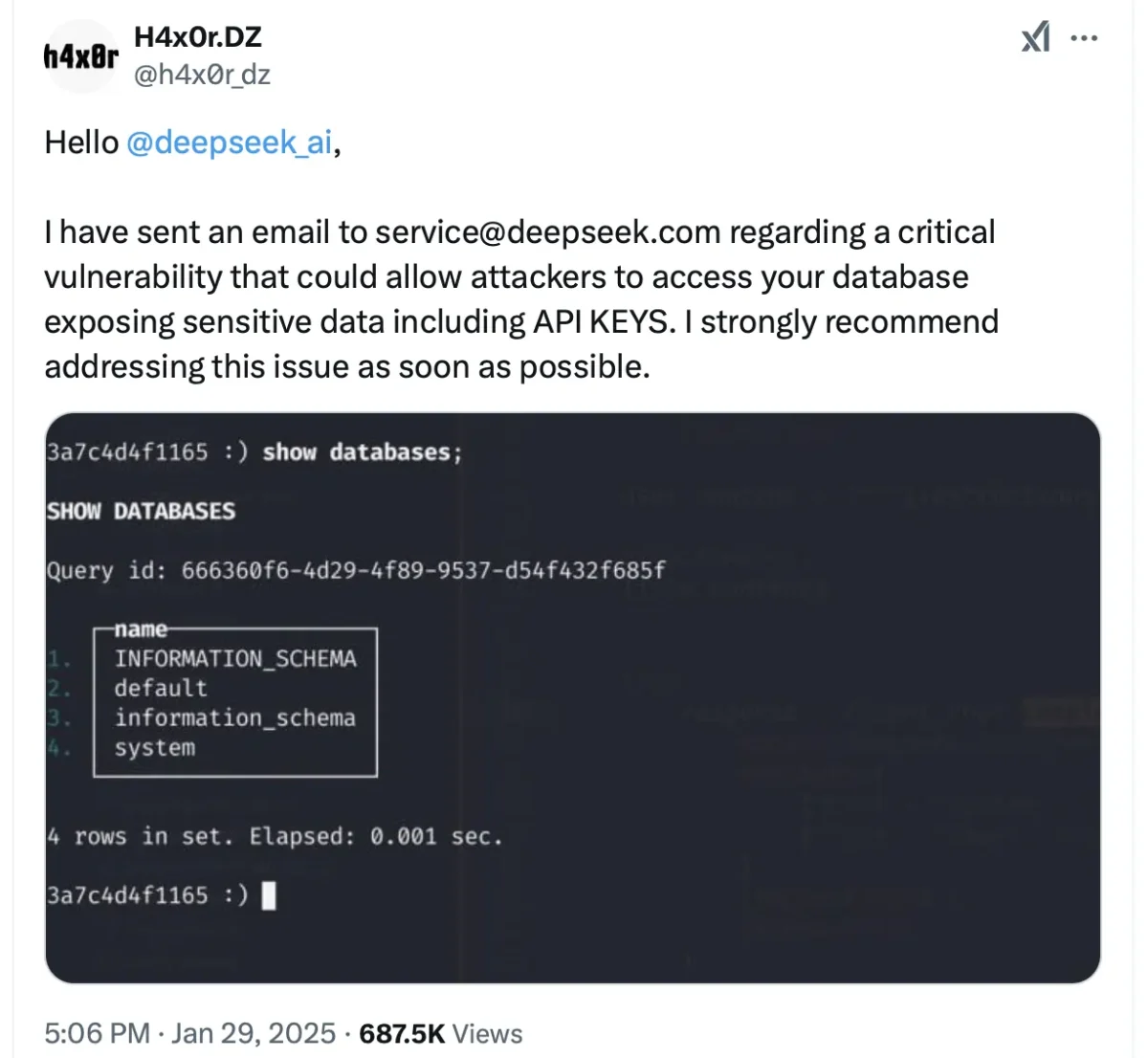

A security researcher also reported the vulnerability to DeepSeek on X.

The researcher later posted an update saying that DeepSeek had fixed the issue.

Impact on the Industry

The DeepSeek incident has several broader implications for the tech industry:

- Security Oversight in AI Adoption: As organizations increasingly adopt AI solutions from emerging startups, the lack of robust security measures can lead to significant vulnerabilities. This incident shows what can happen when speed of innovation is prioritized over security.

- Risk of Data Breaches: With sensitive data being entrusted to AI service providers, the risk of data breaches becomes more pronounced. Companies must ensure that their infrastructure is secure to protect customer data effectively.

- Need for Improved Security Frameworks: The rapid integration of AI technologies into business processes necessitates the establishment of security frameworks that match those employed by established cloud providers and infrastructure services.

Recommendations for Companies

In light of the DeepSeek exposure, companies should take proactive measures to enhance their security posture:

- Conduct Regular Security Audits: Organizations should perform routine assessments of their external attack surfaces to identify and remediate potential vulnerabilities before they can be exploited.

- Implement Strong Authentication Protocols: All databases and sensitive systems should require robust authentication methods to prevent unauthorized access.

- Educate Teams on Security Best Practices: It is crucial for security teams to collaborate closely with AI engineers to ensure comprehensive visibility into the architecture and tooling used in AI applications.

- Prioritize Data Protection: Companies must prioritize safeguarding customer data as they adopt new technologies, ensuring that security measures are not an afterthought but an integral part of their operational strategy.
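One way to act on the data-protection recommendation is to scrub secrets from log lines before they are written to a log store, so that an exposed table does not leak them in plain text. The sketch below is illustrative only: the patterns and the `redact` helper are hypothetical, cover only simple `key=value` and `Bearer` token forms, and would need to be adapted to the key formats your own services use.

```python
import re

# Hypothetical patterns; extend them for the secret formats in your own logs.
# Matches e.g. "api_key=abc123", "token: xyz", "password = hunter2".
KEY_VALUE = re.compile(r"(?i)\b(api[_-]?key|secret|token|password)(\s*[:=]\s*)([^\s,;]+)")
# Matches e.g. "Bearer eyJhbGciOi.abc".
BEARER = re.compile(r"(?i)\b(bearer\s+)([A-Za-z0-9._~+/-]+=*)")


def redact(line: str) -> str:
    """Replace secret values in a log line with a fixed placeholder."""
    line = KEY_VALUE.sub(r"\1\2[REDACTED]", line)
    line = BEARER.sub(r"\1[REDACTED]", line)
    return line


if __name__ == "__main__":
    print(redact("user=alice api_key=abc123 status=200"))
    # prints: user=alice api_key=[REDACTED] status=200
```

Pattern-based redaction is a backstop rather than a guarantee: it misses formats it was not written for (for example, quoted JSON keys), so it complements authentication and network restrictions on the log store rather than replacing them.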

The exposure of DeepSeek’s database serves as a stark reminder of the inherent risks associated with rapid technological adoption in the AI sector.

Challenges

Companies launching a new product tend to face a familiar pattern of challenges, similar to those seen during the launches of ChatGPT and Google Gemini. Each launch brings its own hurdles that must be worked through.

Once these products are on the market, they continue to evolve, improving their services to become more accurate and user-friendly. This ongoing refinement is crucial for staying competitive in an ever-changing landscape.

As businesses increasingly rely on AI solutions, they must also reinforce their security practices to protect sensitive information adequately. The industry must learn from this incident to foster a culture of security that keeps pace with innovation.