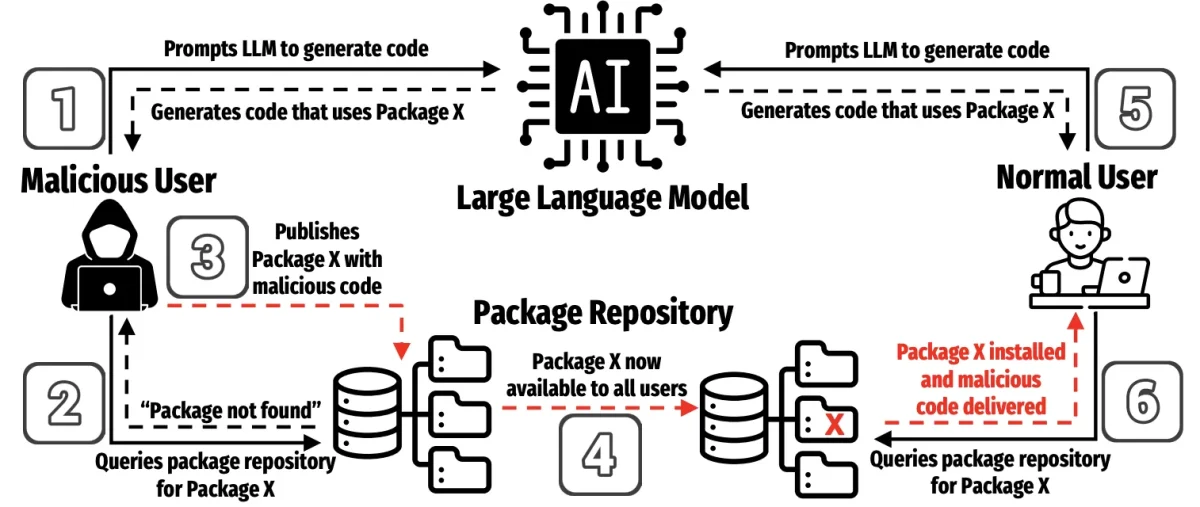

A new research paper has revealed a significant cybersecurity threat stemming from the use of Large Language Models (LLMs) for code generation: “package hallucinations.” The study, available on arXiv, demonstrates that LLMs often recommend or import software packages that do not exist, and that attackers can exploit those invented names.

Key Findings of the Research:

- Widespread Issue: The researchers generated 576,000 code samples in Python and JavaScript. On average, at least 5.2% of the packages recommended by commercial LLMs were hallucinated; for open-source models the figure was much higher, at 21.7%.

- Package Confusion Attacks: A hallucinated name is an unclaimed name. A malicious actor can publish a package under that exact name to a public repository, so that anyone who installs the dependency suggested by the LLM pulls the attacker's code into their software supply chain.

- Unique Hallucinations: The study identified 205,474 unique, non-existent package names, highlighting the scale of the problem.

- Mitigation Strategies: The paper also explores and evaluates various strategies, such as Retrieval Augmented Generation (RAG), to reduce package hallucinations while maintaining code quality.

Impacts and Security Risks:

The phenomenon of package hallucination poses a considerable threat to software supply chains. The attack needs only two steps: an attacker notices a package name that an LLM tends to invent and publishes a malicious package under that name; a developer who trusts the generated code then installs the dependency without checking it. The result can be arbitrary code execution, data breaches, or other malicious activity that compromises the security and integrity of the affected systems.

Developers on High Alert: Verify Before You Trust AI Code

The message from this research is clear: while AI code generation offers exciting possibilities, developers must exercise extreme caution. Every suggested package dependency needs rigorous verification. The researchers have even released their datasets to help the security community further investigate and combat this emerging threat. The era of AI-assisted coding demands a new level of scrutiny to prevent these “hallucinated” packages from becoming real-world security nightmares.
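As a rough illustration of what that verification could look like for Python, the sketch below lists the top-level modules a generated snippet imports and flags any that are not on a team-maintained allowlist. It is not taken from the paper: the helper names, the `APPROVED` set, and the package name `totally_made_up_pkg` are all hypothetical. It also assumes the import name matches the distribution name, which does not always hold (for example, `yaml` is installed as `PyYAML`).

```python
import ast


def imported_modules(source: str) -> set[str]:
    """Return the top-level module names imported by a piece of Python source."""
    names: set[str] = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            for alias in node.names:
                names.add(alias.name.split(".")[0])
        elif isinstance(node, ast.ImportFrom):
            # level > 0 is a relative import (from . import x), not a dependency.
            if node.level == 0 and node.module:
                names.add(node.module.split(".")[0])
    return names


def unreviewed_imports(source: str, approved: set[str]) -> set[str]:
    """Imports in `source` that nobody has vetted yet."""
    return imported_modules(source) - approved


# Hypothetical AI-generated snippet; the second package name is made up.
generated = """
import requests
from totally_made_up_pkg.parser import loads
from . import helpers
"""

# Hypothetical allowlist of dependencies the team has already reviewed.
APPROVED = {"requests", "json", "os"}

print(sorted(unreviewed_imports(generated, APPROVED)))
```

Anything the function returns is a candidate for manual review before it reaches a requirements file; an empty result only means every import was already on the list.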

Recommendations:

Given the risks associated with package hallucinations, the research emphasizes the need for caution when using AI-generated code, particularly in security-sensitive contexts.

Developers should:

- Carefully verify all package dependencies to avoid potential vulnerabilities.

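- Where they control the code-generation pipeline, employ mitigation strategies like Retrieval Augmented Generation (RAG), which the paper evaluated for reducing hallucinations.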
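One simple first check on a suggested Python dependency is whether the name is registered at all. The sketch below queries PyPI's public JSON endpoint (`https://pypi.org/pypi/<name>/json`), which responds with HTTP 404 for unregistered names. The function names and the injectable `exists` parameter are illustrative choices, not something the paper prescribes. Note the limit of this check: a name that does exist is not thereby safe, because an attacker may already have registered the hallucinated name, so a hit still calls for a look at the project's maintainers, history, and source.

```python
import urllib.error
import urllib.parse
import urllib.request
from typing import Callable, Iterable


def exists_on_pypi(name: str, timeout: float = 10.0) -> bool:
    """True if PyPI returns metadata for `name`, False if it answers 404."""
    url = f"https://pypi.org/pypi/{urllib.parse.quote(name, safe='')}/json"
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return response.status == 200
    except urllib.error.HTTPError as err:
        if err.code == 404:
            return False
        raise  # outages or rate limits are not evidence either way


def missing_packages(
    names: Iterable[str],
    exists: Callable[[str], bool] = exists_on_pypi,
) -> list[str]:
    """Return the names for which the lookup reports no registered package."""
    return [name for name in names if not exists(name)]


# Offline demonstration with a stand-in lookup (hypothetical data), so the
# example runs without network access. Drop `exists=` to query PyPI itself.
known = {"requests", "numpy"}
print(missing_packages(["requests", "totally-made-up-pkg"],
                       exists=lambda name: name in known))
# prints ['totally-made-up-pkg']
```

Passing the lookup as a parameter keeps the logic testable and lets the same function be pointed at a private index or a cached list of registered names.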

The datasets from this research have been made publicly available to encourage further investigation into this emerging threat.